Yes, You Can Do This At Home

OK, I was doing a GHCN V1 vs GHCN V2 comparison for “practice” while waiting for GHCN V3. And I decided I needed a direct comparison between the two. That meant the dT/dt method could not be used since it ‘starts time’ (and the baseline) in the present. And “the present” ends in 1990 for V1 and in 2010 for V2. What to do. What to do.

So I decided to use a ‘large chunk of common data’ for the baseline. The results of that are in “other hands” right now and may become a posting later. But along the way I realized that I had a way to validate dT/dt and/or show the “shape of the data” in a way anyone could do at home.

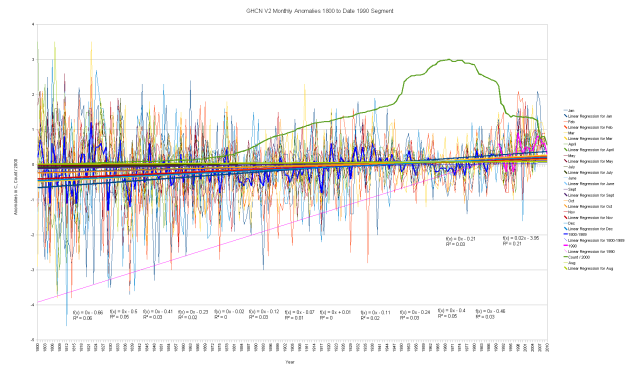

There is a very strong rise out of the Little Ice Age at the start of the thermometer series in 1701. Partly this is due to there being only one thermometer and it was in a cold spot. So I’ve chosen to “start time” in 1800 for this graph. This way it includes the “start of time” for GIStemp (1880) and CRU (1850) along with some context (1800-1850) so we can see if there was any cherry picking going on. It also includes 1816 “the year without a summer” just because I’m interested in it.

This one chart says so much. You can click on it to get a larger version with much more detail.

OK, I’d like to jump right into analysis, but first a couple of notes on the process.

It’s very simple, and yes, you can do this at home.

For each thermometer, I average all the data in any given month. That is the “baseline”. It then gets subtracted from each monthly data value to make that value an “anomaly” or “delta from the average”. These “monthly anomalies” are then averaged together for all thermometers that exist in any given year. (Those data are the thin “hair” lines in the graph for each month.) At the same time, an annual average is calculated by adding up the anomalies for all thermometers in a given year. You could do this in a spreadsheet.
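For anyone who would rather script it than use a spreadsheet, the steps above can be sketched in a few lines of Python. This is a minimal sketch, not the FORTRAN behind the graph: the function names and the `(station, year, month, temp)` record layout are assumptions made for illustration, the toy numbers are invented, and the annual value is taken as the mean of the monthly averages, which is how the method gets spelled out in the comments below.

```python
from collections import defaultdict

def mean(values):
    values = list(values)
    return sum(values) / len(values)

def station_month_baselines(records):
    """Average every reading for each (station, month) pair over all years."""
    groups = defaultdict(list)
    for station, year, month, temp in records:
        if temp is not None:          # missing readings are skipped, not counted
            groups[(station, month)].append(temp)
    return {key: mean(vals) for key, vals in groups.items()}

def to_anomalies(records, baselines):
    """Replace each reading with its difference from the station-month baseline."""
    return [(s, y, m, t - baselines[(s, m)])
            for s, y, m, t in records if t is not None]

def monthly_means(anoms):
    """Average the anomalies of all stations reporting in each (year, month)."""
    groups = defaultdict(list)
    for station, year, month, anom in anoms:
        groups[(year, month)].append(anom)
    return {key: mean(vals) for key, vals in groups.items()}

def annual_means(monthly):
    """Average the available monthly values of each year into one annual value."""
    groups = defaultdict(list)
    for (year, month), value in monthly.items():
        groups[year].append(value)
    return {year: mean(vals) for year, vals in groups.items()}

# Toy data: two hypothetical stations, December readings only.
records = [
    ("A", 1920, 12, 22.0), ("A", 1921, 12, 17.0),
    ("B", 1920, 12, 5.0), ("B", 1921, 12, None), ("B", 1922, 12, 3.0),
]
baselines = station_month_baselines(records)
anoms = to_anomalies(records, baselines)
hair = monthly_means(anoms)      # the thin monthly "hair" lines
annual = annual_means(hair)      # the annual line
```

Note that the baseline is per thermometer and per calendar month, so a station in a cold place and a station in a warm place both end up centered on zero before they are averaged together.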

(And I’d love it if someone were to do so and validate this. This is presently grotty FORTRAN code hacked together from preexisting bits in a particularly cavalier manner and I’ve not gone back yet to do my usual clean up and QA runs. Yeah, I think GIStemp code is starting to corrupt my style ;-)

FWIW, this graph fairly closely duplicates the dT/dt graphs, giving more confidence in the validity of both.

So What About The Graph?

Well, first off, notice that a trend line fit to the annual data is just about dead flat right up until about 1990. (That is the very thin blue line near the zero line.) The change of “duplicate numbers” or “modification history flag” on thermometers starts to hit in about 1986-1987 but those records have a matching entry from the prior “duplicate number” until about 1990 when “the reveal” is done and the older series of “duplicate numbers” are dropped. You could put the segment break at 1987 and get similar results, but I chose 1990 as that is when the data series are left to stand on their own.
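If you want to reproduce the two trend lines yourself, a two-segment least-squares fit is enough. The sketch below is an assumption about how one might do it, not the charting tool's own fit: the function names are made up, the year 1990 itself is assigned to the later segment, and the series at the bottom is synthetic test data, not GHCN.

```python
def linear_fit(xs, ys):
    """Ordinary least-squares line through the points; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def segmented_trends(annual, break_year=1990):
    """Fit one line to the years before break_year and another from break_year on."""
    early = sorted((y, v) for y, v in annual.items() if y < break_year)
    late = sorted((y, v) for y, v in annual.items() if y >= break_year)
    return linear_fit(*zip(*early)), linear_fit(*zip(*late))

# Synthetic series: flat until 1989, then rising 0.1 per year.
annual = {year: 0.0 for year in range(1980, 1990)}
annual.update({year: 0.1 * (year - 1990) for year in range(1990, 2000)})
(early_slope, early_intercept), (late_slope, late_intercept) = segmented_trends(annual)
```

Calling `segmented_trends(annual, break_year=1987)` is the quick way to check how sensitive the two slopes are to where the break is placed.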

At that point we see a dramatic increase in the slope of the trend line as “AGW suddenly begins”. But, IMHO, it’s not the world that’s warming, it’s the data…

That is the most striking thing I see. Just look at the slope of that thin Hot Pink trend line! We go from nearly flat trend up to 1990 (the thin blue line) to dramatic warming (thin hot pink line) just as the “modification history” or “duplicate number” change indicates a change of processing of the data. Hmmmm.

But wait, there is more…

Look at the period from about 1950 to 1980. It’s cold. But it gets cold not by having a lot of cold excursions (those monthly “hair” lines don’t go down very far) but by having no hot excursions (the “hair” barely gets above zero in several years). This, as they say, is odd… Some of those years were cold, but not the whole lot of them. There ought to be some warm excursions in some months. It’s almost like the data were tailored to have no hot excursions in those decades. Perhaps by way of the thermometers that were added coming into those years, then the other thermometers that were deleted when leaving…

Some Mathematical Expectations

Now, mathematically speaking, you would expect a broader range of excursions in the early years when there are few thermometers. If you look at the 1800 to 1940 part of the chart, you see both high and low excursions get compressed as the thermometer count rises. Not dramatic, but clearly happening. Then in that 1950 – 1980 part, volatility goes WAY down. It would be very interesting to do a statistical analysis of the degree of compression of range and ask if that is in keeping with the number of thermometer count change, and if not, it implies thermometer selection bias. To my eye, that’s what the chart says, but it needs a rigorous statistical treatment by someone other than me. Is that volatility reduction really justified by the effect of averaging a few more representative thermometers?
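As a crude first pass at that question (and nothing like the rigorous treatment it deserves), one can compare the yearly spread of the monthly values against what simple averaging would predict. The sketch below assumes stations behave like independent readings of similar variance, so the spread ought to shrink roughly as one over the square root of the thermometer count. Real stations are correlated, so treat the result as a yardstick only. The function names and the toy numbers are invented for illustration.

```python
import math

def yearly_spread(hair):
    """hair: {(year, month): mean anomaly}. Returns {year: max - min over months}."""
    by_year = {}
    for (year, month), value in hair.items():
        by_year.setdefault(year, []).append(value)
    return {year: max(vals) - min(vals) for year, vals in by_year.items()}

def compression_ratio(spread, counts, ref_year):
    """Observed spread relative to ref_year, divided by the sqrt(N) expectation.

    Values near 1 mean the range shrank about as much as the added
    thermometers alone would suggest; values well below 1 mean it shrank more.
    """
    result = {}
    for year, value in spread.items():
        observed = value / spread[ref_year]
        expected = math.sqrt(counts[ref_year] / counts[year])
        result[year] = observed / expected
    return result

# Invented numbers: the spread falls fourfold while the count rises fourfold.
hair = {(1900, 1): -1.0, (1900, 7): 1.0, (1960, 1): -0.25, (1960, 7): 0.25}
counts = {1900: 100, 1960: 400}
spread = yearly_spread(hair)
ratios = compression_ratio(spread, counts, ref_year=1900)
```

In this toy case the count alone would explain a halving of the range, so a ratio of one half for 1960 flags the extra compression.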

Though: it is worth noting that the volatility does start to rebound in about 1977 with a cold spike. I remember it “snowing where it doesn’t snow” in a couple of years around then. But I also remember a lot of “110 F in the shade and there ain’t no shade” years around the ’60s and ’70s. This makes that very low volatility, and no hot excursions at all, look very wrong to me. It was 117 F near Marysville one of those years, the hottest in the area ever and not reached again, IIRC. So those clipped hot peaks are very, very suspicious.

To me, this is NOT just a result of a few more thermometers. It looks like both MORE thermometers and LESS VOLATILE thermometers. Less volatility happens at warmer places (lower latitudes and elevations) and near the water. The same pattern of thermometer change over time that we’ve seen in the prior analysis of thermometer location changes over time. Our “thermometers on the Beach” problem is showing up in the volatility compression. Then a Very Odd Thing happens.

Industrial Revolution 1700s to 1990

As the thermometer count drops, we have a sudden rise in the trend to a spectacular warming. One Small Problem. The Industrial Revolution had been under way since “The Late 18th Century” (or sometime in the late 1700s). So we’ve had burgeoning CO2 production for over 200 years by then. And a Big Fat Nothing. Then suddenly, right when “Global Warming” becomes a popular point (and when NOAA / NCDC, Hadley CRU, and NASA / GISS get buckets of money and True Believers at the helm) we suddenly have a “hockey blade” form in the data. As someone recently said in another posting: “Hmmmm”…

Either CO2 has a 200 year lag in effect, or it’s not CO2 causing the data to change.

But Wait, There Is Even More

So take just a moment to look closely at the “hair” in that 1990 to date segment.

It doesn’t look at all like prior decades. In fact, it looks decidedly Non-Physical. There is an increased range, but only to the upside. Downward anomalies are substantially gone. Not even reaching zero. Yet in all prior time, the range is more or less symmetrical, though with a slightly larger “cold going” range (that is very physical, as hot air rises, so hot going anomalies are limited while cold air just pools around the thermometer. So cold going excursions can go further, at least in the real world.)

That the post 1990 range is so non-physical is, in my professional opinion, evidence of data tampering. Perhaps deliberate, perhaps out of error. But the data are now very non-physical. It has a clear onset in time. That time matches a recognized change in the processing at NOAA / NCDC (when many / most stations increment the “Duplicate Number”) and matches a date when new “Quality Control” procedures were introduced for the USHCN data set that will preferentially suppress cold going anomalies in that data set. Could something similar have happened to GHCN?

Further, 2009 had many cold locations, much snow around the world (it snowed on the French Mediterranean coast…) and was NOT a warm year. Yet the “Hair” for 2009 does not even make it down to the zero normal line. To me, that says these data are cooked. Not enough for a conviction (yet…), but enough to justify a detailed Forensic Investigation.

And yes boys and girls, you can try this at home.

Graph With Legend

I realized that the first graph does not have the legend on it. Here is a version with the legend.

UPDATE: Added Graph With Trends By Month

You really do need to click on this graph and get a bigger copy to see the equations and see the detail of the lines.

I’ve added fat trend lines to each of the monthly “hair” plots. The equations are at the bottom of the graph in “month order”. So if I’ve kept all this straight, you ought to be able to pick out, for example, the August lime green line and the 8th equation in the list and see that August has not warmed. At all. The other summer months are not much warmed either. The “warming trend” is all concentrated in the winter months.
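The same per-month trends can be computed directly rather than read off the chart. A minimal sketch, assuming the monthly averages are held in a dictionary keyed by `(year, month)`; the names are illustrative and the data at the bottom are synthetic (a rising January, a flat August), not the graphed series.

```python
def slope(xs, ys):
    """Least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx

def monthly_slopes(hair):
    """hair: {(year, month): mean anomaly}. Returns {month: trend per year}."""
    series = {}
    for (year, month), value in hair.items():
        series.setdefault(month, []).append((year, value))
    return {month: slope(*zip(*sorted(points)))
            for month, points in series.items()}

# Synthetic example: January warms 0.02 per year, August does not move.
hair = {(year, 1): 0.02 * (year - 1900) for year in range(1900, 1910)}
hair.update({(year, 8): 0.0 for year in range(1900, 1910)})
slopes = monthly_slopes(hair)
ranked = sorted(slopes, key=slopes.get)   # months from least to most warming
```

Restricting `hair` to years from 1990 on before calling `monthly_slopes` gives the post-1990 monthly trends.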

Frankly, I wish this AGW were actually happening. I think more of us would be quite happy to live in perpetual Spring and Summer and skip a lot of the worst cold of winter. Especially the folks dying in South American cold right now…

I find it very interesting that the “hair” plunges below the collective trend lines rather dramatically around 1970 and collectively jumps way above the trend lines in the last decade. Very unusual when compared to prior years. Just wrong for a natural process, IMHO.

These trends, though, argue that CO2 and tipping points are simply a fantasy. There is no tipping point to hotter or you would have summer months warming (there would be an acceleration of warming in the warmer months with positive feedback). Instead we have August going nowhere (perhaps slightly DOWN per the equation) with May and July almost as flat while June and Sept are nearly so. All the positive slope of merit is in Nov, Dec, Jan, Feb, and March. Funny stuff this CO2, only warms the winter months of the Northern Hemisphere…

And yes, I know that the data series has far more N.H. thermometers than southern hemisphere and that without areal weighting they will dominate the trend lines.

It would be particularly interesting to put up a graph of the monthly trend lines after 1990. Perhaps I’ll do that one for another posting. But I think it’s pretty clear that the “all data” graph has interesting variation from month to month in trend. Wonder how the CO2 theory can be warped such that August doesn’t warm?…

To me, that says these data are cooked.

Too true… and thank you for all your hard work in showing how this has been done… adding sites at airports and the coast… dropping colder ones like Bolivia…

Let’s take that as a given for the sake of argument, i.e. each year they fiddle with the data to get the required result.

Now, let’s take a look at the station count:

They switch in some “hot” stations for 1950 to 1970 to reduce the temperature drop leading up to the 1970s iceage scare… this is a NET effect hidden in the bubble of new stations.

They start removing these cold stations from 1970 to 1998 to get the correct temperature rise… the rising solar cycle and the 1998 El Niño gives them a break so things stay fairly stable until 2007…

Now look at the station drop outs from 2007… this is hiding the decline… big time… at this rate there will be no stations left in a few years….

So where are we… that is not easy to say because the books are cooked… but my guess: the 1930s and 1940s were the century maximum… the long term trend since 1950 is downward… we had a slight reprieve with Solar Cycle 23… but we are now back on track… into the decline.

Another nice one, Mr. Smith, but of course “they” feel completely justified in Mangling, oops I mean “improving” the data based on their superior knowledge.

The first law of a salesman says: “Do not ever mention your competition”.

We all know, much more after “Climate Gate”, that all this was a scam. There is no need to mention it anymore.

Just a non-scientific, and probably “who cares” type observation: The increase in “official” temperature measuring sites across the U.S., really started a bit prior to the 1930’s, and can be attributed primarily to the rise of aviation, airmail, and airfields. Then, WWII brought even more rapid expansion of military airfields in the U.S., especially in the south, and we had even more “weather observations” available. After WWII commercial aviation similarly fueled the expansion of aviation weather sites.

To repeat what you have done, I need to access the same data. Links please.

@Mike Jonas: I used the GHCN data from NASA. Links are under the GIStemp tab up top, but I’ll dig around a bit and put a copy of the link here.

Ah, there it is:

ftp://ftp.ncdc.noaa.gov/pub/data/ghcn/v2/

I used the Feb 2010 copy, but the present copy ought not to be too much different up to 2009.

You want the “v2.mean” file.
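For those reading the file with something other than a spreadsheet, here is a hedged sketch of a line parser. The column layout is an assumption on my part and ought to be checked against the readme in that FTP directory: a 12 character station ID (country code, station number, and the final “duplicate number” digit), a 4 digit year, then twelve 5 character monthly values in tenths of a degree C with -9999 marking missing data. The function names and the all-zero sample station ID are made up for illustration.

```python
MISSING = -9999   # assumed missing-data flag

def parse_v2_mean_line(line):
    """Split one fixed-width line into (station_id, year, 12 temps in deg C or None)."""
    station_id = line[0:12]          # kept whole, duplicate number included
    year = int(line[12:16])
    temps = []
    for i in range(12):
        raw = int(line[16 + 5 * i: 21 + 5 * i])
        temps.append(None if raw == MISSING else raw / 10.0)
    return station_id, year, temps

def read_v2_mean(path):
    """Yield one parsed record per non-blank line of the file at path."""
    with open(path) as handle:
        for line in handle:
            if line.strip():
                yield parse_v2_mean_line(line.rstrip("\n"))

# A made-up line in the assumed layout, built here so the widths are explicit.
sample_values = [100, 112, MISSING] + [150] * 9
sample_line = "000000000000" + "1920" + "".join(f"{v:5d}" for v in sample_values)
station_id, year, temps = parse_v2_mean_line(sample_line)
```

Keeping the full 12 character ID means each “duplicate number” series is treated as its own thermometer record.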

@Rod Smith:

It’s actually an important observation. Most airports have transitioned from a grass field to acres of tarmac and jet exhaust over the last 75 years. That many of the thermometer stations were located there (and a very high percentage of those that survive to date…) is a fundamental warming bias via station selection in GHCN.

@All:

UPDATE: I’ve put a couple of more graphs in the posting, toward the bottom of it. One includes monthly trend lines that have an interesting pattern. August does not get warmer…

Thx. I have downloaded the data and can read it ok.

No promises as to when I can complete the task.

@Mike Jonas:

FWIW, under the dT/dt category on the right side of the page, you will find a bunch of examples of where I used a slightly different technique but applied it “by country”.

You tend to get very similar graphs.

So you could start by just picking one country, select out the records with that “country code” (the first 3 digits), and start with them. That gets it down to a couple of dozen thermometers for some countries, but still yields an interesting answer.

The USA has the most data, so I’d leave it for last…
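Picking out one country is just a matter of grouping on those first 3 digits. A small sketch, with invented station IDs and illustrative function names, that also lists the countries from fewest thermometers to most so the big ones can be left for last:

```python
from collections import defaultdict

def stations_by_country(station_ids):
    """Group station IDs by their 3 digit country code prefix."""
    groups = defaultdict(set)
    for station_id in station_ids:
        groups[station_id[:3]].add(station_id)
    return groups

def smallest_first(groups):
    """Country codes ordered from fewest stations to most."""
    return sorted(groups, key=lambda code: len(groups[code]))

# Invented IDs; only the first 3 digits matter for this example.
ids = [
    "101000000010", "101000000020",
    "425000000010", "425000000020", "425000000030",
    "302000000010",
]
groups = stations_by_country(ids)
order = smallest_first(groups)
```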

Is it possible that what you have done is remove all traces of the “natural” warming in the last 200 years, and left only the AGW component? The trend in pink could easily be pulled back to start in the late 60s, and be consistent with AGW.

Not that I believe in AGW, but your work looks to me as if it is saying that the post-1980s data IS showing AGW. Of course, it could be (as I think) AGW caused by data selection, deletion and “corrections”. Still, if you believe in the data (an IPCC hanging point), then I’d suggest you just gave the warmists a good speaking point ….

Say you what?

I have done a bit of work on the v2 data, and believe I have been able to quantify the amount of selection bias. See-

NOAAv2SelectionBias20100804.pdf

Please do not treat the above document as gospel yet, because I haven’t double-checked all my workings – but the results do appear to be very significant.

I would be keen to have someone go over the method I used, but I would prefer to supply the details “off-line” at this stage. If you can email me, I’ll reply with the details.

@Mike Jonas. Very interesting, and also being an Excel user I’m keen to know how too!

I’ve got the data loaded and expanded into station/year/month/temperature records (all 6m of them). But now I’m at the business end, I can’t quite see what you mean by :

For each thermometer, I average all the data in any given month. That is the “baseline”. It then gets subtracted from each monthly data value to make that value an “anomaly” or “delta from the average”. These “monthly anomalies” are then averaged together for all thermometers that exist in any given year (Those data are the thin “hair” lines in the graph for each month.), at the same time an annual average is calculated by adding up the anomalies for all thermometers in a given year. You could do this in a spreadsheet.

for any given year

Baseline#n = avg(all readings in mth n)

for station s month n

anomaly#s#n = reading – baseline#n

for month n

anomaly#n = avg[all s](all anomaly#s#n)

for the year

annual average = sum[all s, all n](all anomaly#s#n)

Presumably that last one should be an avg not a sum??

I haven’t done the arithmetic, but I don’t see how this can ever trend away from zero over a period of years.

I must be missing something.

PS. I am currently trying to find out whether my findings on selection bias could get published in a p-r journal.

Doug Proctor

Is it possible that what you have done is remove all traces of the “natural” warming in the last 200 years, and left only the AGW component? The trend in pink could easily be pulled back to start in the late 60s, and be consistent with AGW.

Actually, no. That would ignore the COOLING into the ’70s. We were not warming for almost the entirety of the industrial revolution. There is a clear rise out of a known abnormally cold period (the Little Ice Age) then flat for a lot of the industrial revolution later years, with a DROP during the most rapid growth into the early 80’s. Not consistent with AGW at all. And this process does not remove any data nor any trend, so I’m not ‘removing’ anything natural.

Then the most telling thing about the graph, but what most folks seem to ignore, is the “Hair” part. Those monthly excursions. Suddenly we have a ‘clipping’ of the low going excursions of a type never seen anywhere in the record before starting right when a change of data processing happens with the change of mod-flag (duplicate number). So we have an overall rise similar to what has happened before, but with a “hair” profile that is just crazy. That compression of range is just bizarre, especially as the number of stations drops.

@Mike Jonas

For each thermometer, I average all the data in any given month. That is the “baseline”. It then gets subtracted from each monthly data value to make that value an “anomaly” or “delta from the average”.

Foreach STATIONnumber, Foreach Month, SUM (all data values). Divide Sum(all data values of S-M)/count of (S-M) data items.

That gives the average value of all the monthly data for any given station for any given month. Missing data flags are simply ignored and not counted. That is the “baseline” for Station-Month. (for example, L.A. in December average)

OK, now we pass through all the v2.Mean data again, and subtract the average from each data item (for any given station-month). If L.A. 1920 December were 22 and the average of all L.A. Decembers were 19.5, the result would be 2.5 as 1920 Dec is 2.5 degrees above the average of all L.A. Decembers.

These “monthly anomalies” are then averaged together for all thermometers that exist in any given year (Those data are the thin “hair” lines in the graph for each month.), at the same time an annual average is calculated by adding up the anomalies for all thermometers in a given year. You could do this in a spreadsheet.

So we take this set of “anomalies” and we sum all of them in EACH month in any given year. SUM for EACH YEAR (all anomalies of JAN as JANANOM, All anomalies of FEB as FEBANOM, all anomalies of MAR as MARCHANOM, etc.) and print that as the 12 points of the “Hair” lines for THAT year in the above chart.

Average those 12 points to get the annual average anomaly (the thick blue line early on, the pink line at the end).

OK, I’ll see if I can decode your version and match it.

I think you are using #n for “month” here and saying Baseline(of month #n) = ave(all readings in month #n) for A STATION “s” in that month.

I think that is what I’ve said.

for any given year

Baseline#n = avg(all readings in mth n)

for station s month n

Then calculate an anomaly of the data for the station “#s” in that month “#n” as the reading in that month minus the baseline calculated for that month. I’d only add that this is done BY YEAR, so you get a data point of anomaly for each station, for each year, for each month. The same total data size as the original data in v2.mean, but with each temperature replaced by an “anomaly” made from subtracting the average of all (station:month) items from THAT PARTICULAR (station:year:month) item.

anomaly#s#n = reading – baseline#n

for month n

So I’m not seeing the word “year” in that line…

anomaly#n = avg[all s](all anomaly#s#n)

for the year

annual average = sum[all s, all n](all anomaly#s#n)

I’m not sure how to read that last part. Going into this part, you ought to have a table with the same number of entries as v2.mean, and all you do is average the anomalies by year by month. That would give a table of years with 12 entries of one for each month that are the average of all station anomalies in that month in that year. (In fact, I make that table. From it, I make the graph). And the 12 entries in any one year can be averaged to make the year anomaly.

This is what gets plotted. By year, the average of all anomalies for all stations with data in any given month, the average of those 12 numbers for that year, and the total number of stations active in that year. ( I hope the sample report I grabbed for this is somewhat close to the actual one graphed above 8-)
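A sketch of how that table could be built from the per-station anomalies follows. It is an illustration under stated assumptions, not the report code itself: the names are invented, months with no data are carried as `None`, the annual figure averages only the months that have data, and the station count is the number of distinct station IDs seen in the year.

```python
from collections import defaultdict

def yearly_report(anoms):
    """anoms: iterable of (station, year, month, anomaly).

    Returns {year: (12 monthly means or None, annual mean, station count)}.
    """
    cells = defaultdict(list)
    stations = defaultdict(set)
    for station, year, month, anom in anoms:
        cells[(year, month)].append(anom)
        stations[year].add(station)
    report = {}
    for year in sorted(stations):
        months = []
        for m in range(1, 13):
            values = cells.get((year, m))
            months.append(sum(values) / len(values) if values else None)
        present = [v for v in months if v is not None]
        report[year] = (months, sum(present) / len(present), len(stations[year]))
    return report

def format_row(year, months, annual, count):
    """One text line: year, 12 monthly columns, annual mean, station count."""
    cols = " ".join("   n/a" if v is None else f"{v:6.2f}" for v in months)
    return f"{year} {cols} {annual:6.2f} {count:5d}"

# Tiny invented input: two stations in January, one in February.
anoms = [("A", 1920, 1, 1.0), ("B", 1920, 1, 3.0), ("A", 1920, 2, -2.0)]
report = yearly_report(anoms)
rows = [format_row(year, *report[year]) for year in report]
```

Each row carries everything needed for the chart: the twelve “hair” points, the annual line, and the thermometer count.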

Thx for the info. Will hopefully have time to work through it early next week.

EM

According to GISS it looks like GHCN v3 won’t be out until late this year:

Found that in the paper that GISS is trying to get published, which you can get from this link:

gistemp2010_draft0803.pdf

I’ve just gone through the Hansen 2010 pre-print mentioned. Though there is mention of using satellite data for ocean temperatures, I don’t see the UAH or RSS tags. Nor is it used over the land, or over the poles.

Is there a serious disagreement between these datasets that Hansen doesn’t even want to let us know they know, or is the UAH/RSS data referenced differently?

If this is the pre-print, this is what the warmists will trumpet as the answer to every skeptic’s complaint, i.e. that they checked and every adjustment and anomaly is correct and real.

Is this the paper to have a Watts et al counterpaper? To come out at the same time and go to all the newspapers at the same time as NASA officially releases their new, compelling work at a press conference and pre-Hansen-knighthood ceremony?

@Doug Proctor

The satellite data used in Gistemp is for SST’s and is not UAH or RSS, it’s a separate dataset called Reynolds OIv2. A good definition of it is this:

You can read up about all the SST datasets here:

http://bobtisdale.blogspot.com/2010/07/overview-of-sea-surface-temperature.html

Gistemp Land Temperature analysis is strictly based on land based thermometers and interpolation to area where there are no thermometers. The biggest example of this is the poles. If you look at this post’s graphics here:

GISS does not have any actual readings in the gray shaded areas, and those areas are actually larger than shown because you can only turn down the amount of infilling from 1200km to 250 km.

I made a nice little Youtube animation that shows you the area these thermometers cover (out to 250km) over the entire span of the GISS record:

As to disagreement between Gistemp and UAH and/or RSS, the answer is yes; as a matter of fact, Gistemp is the outlier among all five datasets: HadCrut3, NCDC, RSS, UAH and GISS. This is due to their Polar interpolation. With that being said, Gistemp’s biggest divergence is with the satellite record and it’s been growing bigger every year.

here you can see the divergence:

And no, this is not the paper that is based on misused SurfaceStation.org data. That paper is Menne et al 2010 and you can read about that here:

I haven’t read the complete paper yet, but as of right now this is what GISS uses. (Keep in mind GISS does not originate any of the “raw” data it analyzes; it all comes from NOAA.)

The usage of Sats in GIStemp is minimal at best. It’s sort of a seasoning in the ‘optimal interpolation’ of all the other ocean surface temperatures that all get combined into a SST mush that then gets sort of grafted onto the land temps.

I’ve got a posting up about it from the way early days under the AGW and GIStemp issues topic…

Global warming is expected to have a primary effect of reducing the cold. More effect in winter, at night, and in higher latitudes.

It is the daily low temperatures that are rising more than the daily max.

@MikeN: Great news! Then there is no “tipping point” as we already have handled the highs just fine. And less cold means more crops growing faster, fewer deaths (as deaths from cold far outweigh those from heat), less fuel needed, more food and generally better wealth and well being for all.

Of course, since “Global Warming” predicts everything, it actually predicts nothing. And you will also need to explain how it has this magical effect with a step function onset in 1989-1992 and with differential warming of the cold months in different places (each country has different months warming and cooling, often the SAME month warming in one country and cooling in the one right next to it…) And a few other odd artifacts, but I’m sure it will be No Problem for the “Global Warming Theory Of Everything” to be expanded to cover those annoyances.

But I am so glad to hear that all we really need to worry about is less miserable cold winters. That’s a relief. (Never mind that it’s snowed like crazy in the ski resorts world wide in this last “warmest ever” winter while not setting any warmth records and while people from Mongolia to Peru were dying from the intense cold that wasn’t there…)

“Oh what a tangled web we weave when first we practice to …”